Evaluating whether GPT-4 can play Chess

GPT-4 will attempt to play games such as Chess, and it proposes ways to play them using only text input and output.

I was interested to see whether I could play a game of chess against GPT-4. In particular I was interested in answering the following questions:

Does GPT-4 know Chess rules?

Will it be able to represent the Chess board and states using text?

Will it keep track of previous moves?

Will it make / detect illegal moves?

Can it visualise the game?

How does it compare to ChatGPT?

Setting up the game

Model Used: GPT-4 8k in Chat Mode on 4/4/2023.

I was expecting to have to provide GPT-4 with a series of prompts explaining how we should represent the board using ASCII characters, as well as a format for communicating our moves at each step. I also assumed that I would need to keep the state of the board myself and prompt GPT-4 with the updated state after each turn.

It turns out that setting up the game was much easier than expected. My first prompt was to tell GPT-4 that I would like to play a game of chess:

Prompt:

If I wanted to try and play chess against you instead. How would we visualise the board and inform each other on which moves we are making?

GPT-4 replied that all we need to do is use Algebraic notation to record and describe the moves. It provided instructions on how to label the columns and rows of the board, a letter to represent each chess piece, and the notation for describing moves.

Chess Algebraic Notation as proposed by GPT-4:

Columns labelled a .. h

Rows labelled 1 .. 8

Letters for the pieces (K)ing, (Q)ueen, (R)ook, (B)ishop, k(N)ight, and (P)awn

Moves are written as follows: [piece][destination_column][destination_row].

Captures are modified as follows: [piece]x[destination_column][destination_row]

White pieces are denoted as uppercase letters and black pieces are lowercase.
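As a minimal sketch, the simplified notation listed above could be parsed as follows. The names `Move`, `MOVE_RE` and `parse_move` are hypothetical and chosen for illustration only. The pattern covers just the `[piece][x][column][row]` form described here, so it is not a full algebraic notation parser (standard notation usually omits the pawn letter and has extra symbols for castling, checks and promotions).

```python
import re
from typing import NamedTuple


class Move(NamedTuple):
    piece: str     # one of K, Q, R, B, N, P
    capture: bool  # True when the move contains an "x"
    column: str    # destination column, a..h
    row: int       # destination row, 1..8


# Hypothetical pattern for the simplified form: [piece][x?][column][row].
MOVE_RE = re.compile(r"^([KQRBNP])(x?)([a-h])([1-8])$")


def parse_move(text: str) -> Move:
    """Parse a move such as 'Nf3' or 'Bxe5' in the simplified notation."""
    match = MOVE_RE.match(text.strip())
    if match is None:
        raise ValueError(f"not a move in the simplified notation: {text!r}")
    piece, capture, column, row = match.groups()
    return Move(piece, capture == "x", column, int(row))
```

For example, `parse_move("Nf3")` describes a Knight moving to f3, while `parse_move("Bxe5")` describes a Bishop capturing on e5. A string without a piece letter, such as `"e4"`, is rejected because this scheme always names the piece.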

Can GPT-4 make legal chess moves?

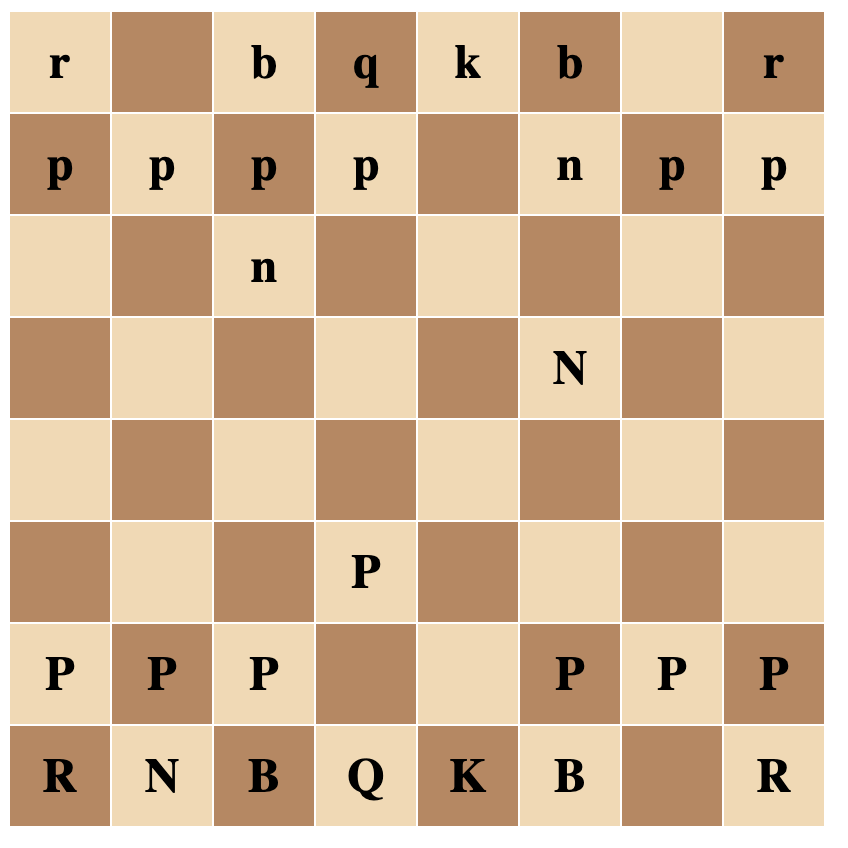

GPT-4 instructed me to take the first move and then responded with an updated board state (in ASCII characters). It then took its first legal move and updated the board state again.

I also attempted to capture one of the AI’s pieces. GPT-4 acknowledged the capture and updated the board state correctly.

So far, so good.

How does GPT-4 handle illegal moves?

I tried to move my Knight illegally. GPT-4 replied that this was not a legal move and did not update the board state. It asked me to try again and then updated the board state accordingly.
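To illustrate the kind of check involved, here is a minimal sketch of the geometric part of Knight legality. The helpers `square_to_coords` and `is_knight_jump` are hypothetical names. The sketch only tests the L-shaped jump and deliberately ignores everything else a full legality check needs, such as whether the destination holds a friendly piece or whether the move leaves the King in check.

```python
from typing import Tuple


def square_to_coords(square: str) -> Tuple[int, int]:
    """Convert a square such as 'g1' to zero-based (column, row) indices."""
    if len(square) != 2 or square[0] not in "abcdefgh" or square[1] not in "12345678":
        raise ValueError(f"not a valid square: {square!r}")
    return ord(square[0]) - ord("a"), int(square[1]) - 1


def is_knight_jump(source: str, target: str) -> bool:
    """Return True when source -> target is an L-shaped Knight jump."""
    source_col, source_row = square_to_coords(source)
    target_col, target_row = square_to_coords(target)
    # A Knight moves two squares along one axis and one along the other.
    deltas = sorted((abs(source_col - target_col), abs(source_row - target_row)))
    return deltas == [1, 2]
```

With this sketch, `is_knight_jump("g1", "f3")` is accepted, whereas a straight move such as `is_knight_jump("g1", "g3")` is rejected.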

GPT-4 Failed Quickly

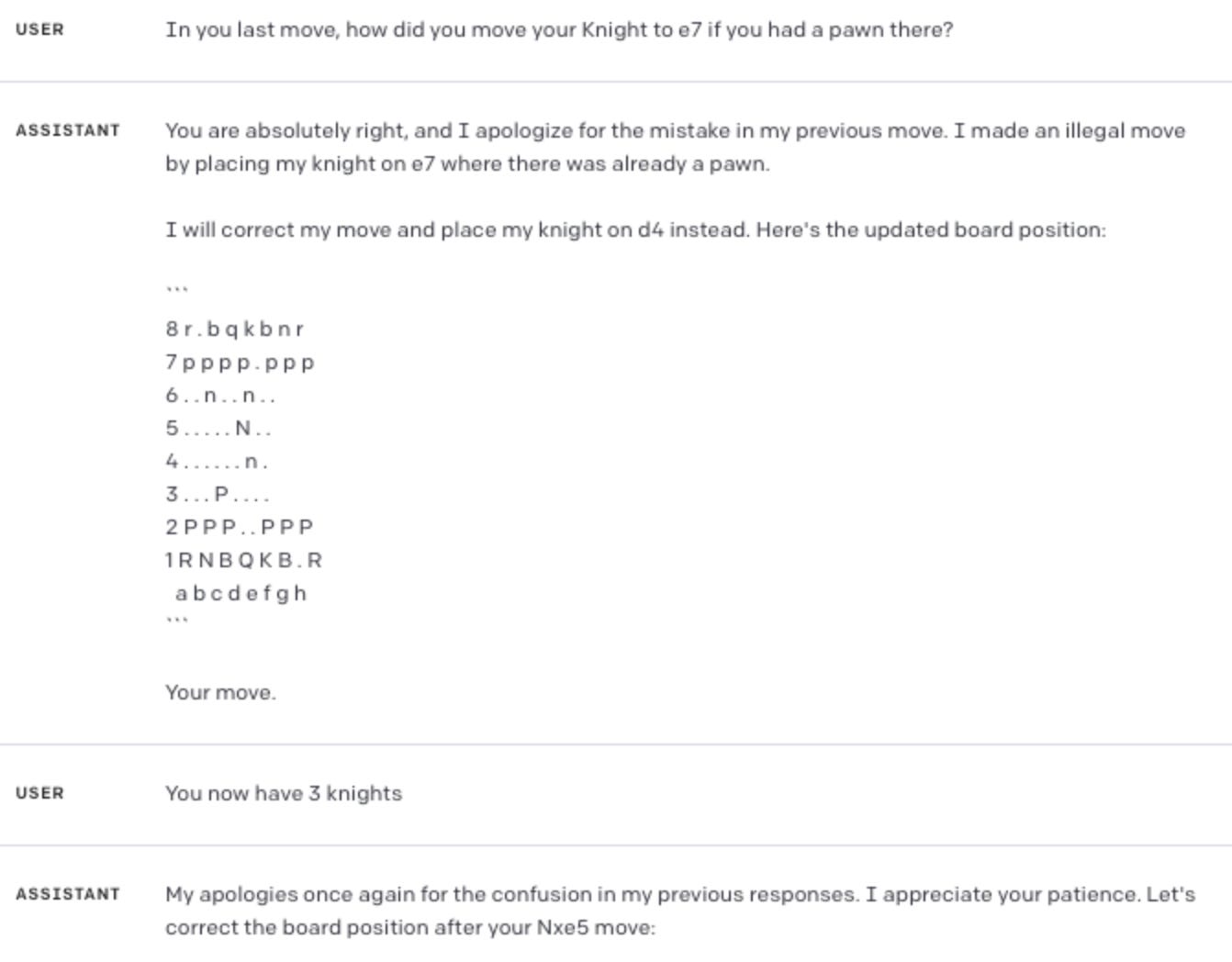

After a few moves each, GPT-4 moved one of its pieces illegally onto a square occupied by one of its own Pawns and presented it as a legal move.

I pointed this out. GPT-4 acknowledged the mistake and undid the move, reverting the board state. At this point though, the state was incorrect and one of my pieces was no longer being displayed while GPT-4 had 3 Knights.
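A corrupted state like this one can be caught with a simple piece count. The sketch below assumes the board is given as eight strings of eight characters, with uppercase letters for white, lowercase for black and `.` for an empty square. That layout is an assumption made for illustration, not necessarily the exact format GPT-4 printed. It also assumes no Pawn has been promoted yet, which holds this early in a game, so no piece type may exceed its starting count. The names `START_COUNTS` and `find_count_violations` are hypothetical.

```python
from collections import Counter
from typing import List

# Starting number of each piece type per side.
START_COUNTS = {"K": 1, "Q": 1, "R": 2, "B": 2, "N": 2, "P": 8}


def find_count_violations(board_rows: List[str]) -> List[str]:
    """Report piece types that appear more often than at the start of a game.

    Assumes no promotions have happened, so extra pieces indicate a bad state.
    """
    counts = Counter(ch for row in board_rows for ch in row if ch.isalpha())
    problems = []
    for piece, limit in START_COUNTS.items():
        for symbol in (piece, piece.lower()):  # white first, then black
            if counts[symbol] > limit:
                problems.append(
                    f"{symbol}: {counts[symbol]} on board, at most {limit} expected"
                )
    return problems
```

A board on which black has three Knights would produce one reported problem for `n`. A missing piece cannot be detected this way, since it looks the same as a legitimate capture; that requires replaying the move history.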

What went wrong?

It seems that over time (as more moves are made), GPT-4 starts to lose its grounding in the original prompt and instructions. The responses no longer respected the context provided at the start of the chat.

Visualising the Chess Board

I was curious as to whether GPT-4 would be able to visualise the board state in HTML and JavaScript. It turns out it can.

Comparing to ChatGPT performance

I tried a similar prompt with ChatGPT.

At first the model replied saying that it is a Large Language Model (LLM) and therefore cannot play games. I further qualified my prompt and suggested using Algebraic notation and ASCII characters to represent the board (note that such qualifications were not needed with GPT-4).

This time ChatGPT said it would be possible and returned the notations for the pieces and the algebraic representations for the moves. The information was incomplete but enough for me to get started.

I took the first move.

ChatGPT updated the board state but it was already incorrect. It then took its turn and my piece was moved randomly.

So even though GPT-4 failed after a few turns, it was able to propose the best way to play the game, made a series of legal moves and understood when it failed to do so. At the same time ChatGPT (version 3.x) failed to perform a single move and couldn’t maintain the board state.

Can we choose a simpler game and get better performance?

I decided to challenge GPT-4 to a game of Tic-Tac-Toe. This time I decided to use my own notation to reduce chances of similar notation being seen during training. I used the following prompt:

PROMPT:

Let's try and play. We will assume the following

U means an empty square on the grid

X is my symbol

O is your symbol

The grid starts off as:

UUU

UUU

UUU

GPT-4 was able to quickly set up the board and keep the board state correct.

It was unable to play strategically though and always failed to block me from completing three in a row.

I instructed GPT-4 to block me whenever I had two in a row and then started a new game.

This time, following my instructions, GPT-4 was able to block me when I had two in a row, but it only did this once, which allowed me to win the game eventually.
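For comparison, the blocking rule is simple to state in code. The following is a minimal sketch using the same U / X / O notation as the prompt, with the grid given as three strings of three characters. The names `LINES` and `find_block` are hypothetical, and the function only implements the "block two in a row" rule, not a complete playing strategy.

```python
from typing import List, Optional

# Index triples for every winning line on a 3x3 grid read row by row.
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),  # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),  # columns
    (0, 4, 8), (2, 4, 6),             # diagonals
]


def find_block(grid_rows: List[str], opponent: str = "X") -> Optional[int]:
    """Return the cell index (0..8) that blocks the opponent, or None.

    A block is needed when a line holds two opponent marks and one empty "U".
    """
    cells = "".join(grid_rows)
    if len(cells) != 9:
        raise ValueError("grid must contain exactly 9 cells")
    for line in LINES:
        marks = [cells[i] for i in line]
        if marks.count(opponent) == 2 and marks.count("U") == 1:
            return line[marks.index("U")]
    return None
```

For the grid `["XXU", "UOU", "UUU"]` the function returns index 2, the top-right square, which is where O must play to stop X from completing the top row.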

It is possible that, because I used my own notation for Tic-Tac-Toe in this experiment, GPT-4 had not encountered many examples using the same notation during training. If this is the case, then it is interesting that it was able to block after my additional instructions.

In conclusion

For a Large Language Model that relies on next-token prediction, it is impressive that GPT-4 was actually able to propose ways to play these games, maintain game state, make legal moves and detect incorrect ones. Despite this, GPT-4 was unable to maintain a valid game state long enough for me to evaluate whether it was able to make tactical / defensive moves.

My assumption is that it learnt the Algebraic notation and the valid piece moves from the large number of examples it could have ‘seen’ in the training data set. Keep in mind that chess games are widely recorded as text in Algebraic notation on the internet.

It is interesting to see the improvement of GPT-4 over ChatGPT in these examples, but (based on my observations only) it is still far from achieving beginner level performance in chess.